This Isn’t a Blog.

It’s a Technical Playbook for Leaving the Cloud Oligopoly.

Every article in this resource center is engineered for one audience: CTOs, VPs of Engineering, and AI researchers who have hit the ceiling of what hyperscale cloud providers can offer and are paying a premium for the privilege. We don’t publish thought-leadership fluff. We publish operational blueprints.

Inside, you’ll find in-depth technical analysis of high-performance GPU cloud architecture, real-world migration frameworks for moving petabyte-scale training workloads without downtime, and transparent cost breakdowns that expose the true total-cost-of-ownership gap between shared virtualized instances and dedicated bare-metal deployments. From NVIDIA Blackwell B200 hosting configurations to single-tenant security models that eliminate noisy-neighbor risk, each piece is designed to give your team the intelligence it needs to make infrastructure decisions with confidence rather than under vendor lock-in. Consider this your technical roadmap for scaling AI model training infrastructure without the hyperscaler tax.

Bare-Metal AI Infrastructure: Max Throughput, Zero Bloat

Bare-Metal AI Infrastructure: Maximum Throughput, Zero Virtualization Bloat. When training multi-billion-parameter LLMs, compute is only half the equation; the speed at which your nodes communicate dictates your actual time-to-value. For elite engineering teams,...

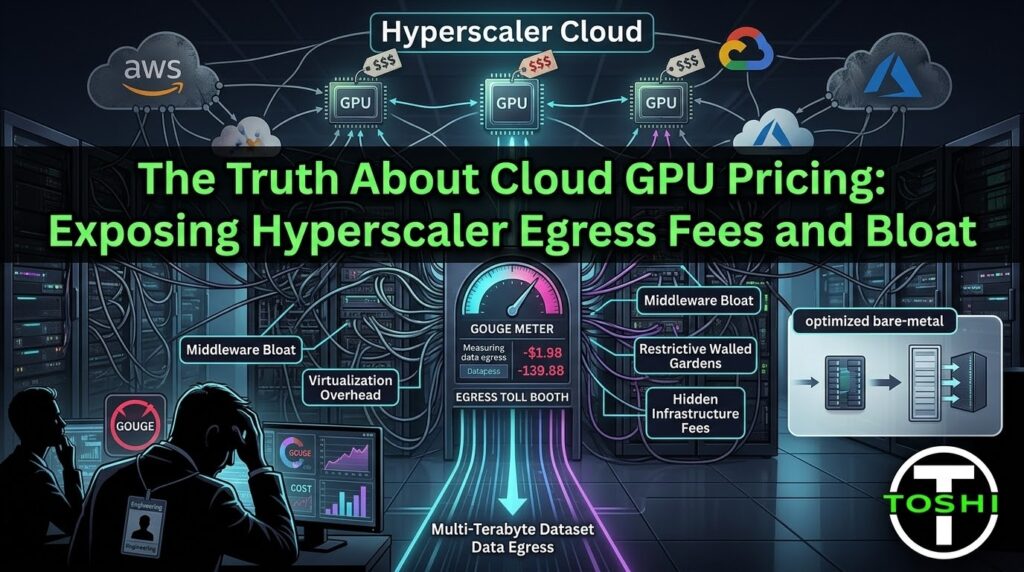

Cloud GPU Pricing: Exposing Egress Fees & Bloat

The Truth About Cloud GPU Pricing: Exposing Hyperscaler Egress Fees and Bloat. If you are a CTO overseeing massive machine learning pipelines, you already know that the sticker price of compute is a lie. When auditing cloud GPU pricing, the hourly rate is merely the...

NVIDIA Blackwell B200 Availability: Skip the Waitlists

NVIDIA Blackwell B200 Availability: How to Skip the Hyperscaler Waitlist. In the AI arms race, compute is the primary bottleneck. For VPs of Engineering and Lead Researchers, there is nothing more frustrating than having the budget, the talent, and the data, but...

Migrating AI Workloads: Zero Downtime, Zero Lock-In

Migrating AI Workloads: Why Transitioning to ToshiHPC Won't Stall Your Roadmap. When you have dozens of engineers building complex pipelines, the thought of migrating AI workloads to a new infrastructure provider feels like scheduling a root canal. CTOs inherently...

AI Model Data Security: Leaving Hyperscalers Safely

The CTO's Guide to AI Model Data Security: Escaping the Hyperscaler Walled Garden. For modern CTOs and Lead AI Researchers, proprietary data and trained model weights are your ultimate competitive moat. When evaluating a migration away from AWS, GCP, or Azure, the...

"AWS is How" with AI, or Is There Something Better for Small and Medium-Sized Businesses?

"AWS is How" [with AI] OR Is There Something Better for Small and Medium-Sized Businesses? You’ve seen the commercials. You’ve heard the Rolling Stones’ "Jumpin' Jack Flash" blasting while sleek montages proclaim that AWS is how Nasdaq provides transparency, or how...