by Chris Bernard | Mar 20, 2026 | Uncategorized

Bare-Metal AI Infrastructure: Maximum Throughput, Zero Virtualization Bloat

When training multi-billion-parameter LLMs, compute is only half the equation; the speed at which your nodes communicate dictates your actual time-to-value. For elite engineering teams,...

by Chris Bernard | Mar 20, 2026 | Uncategorized

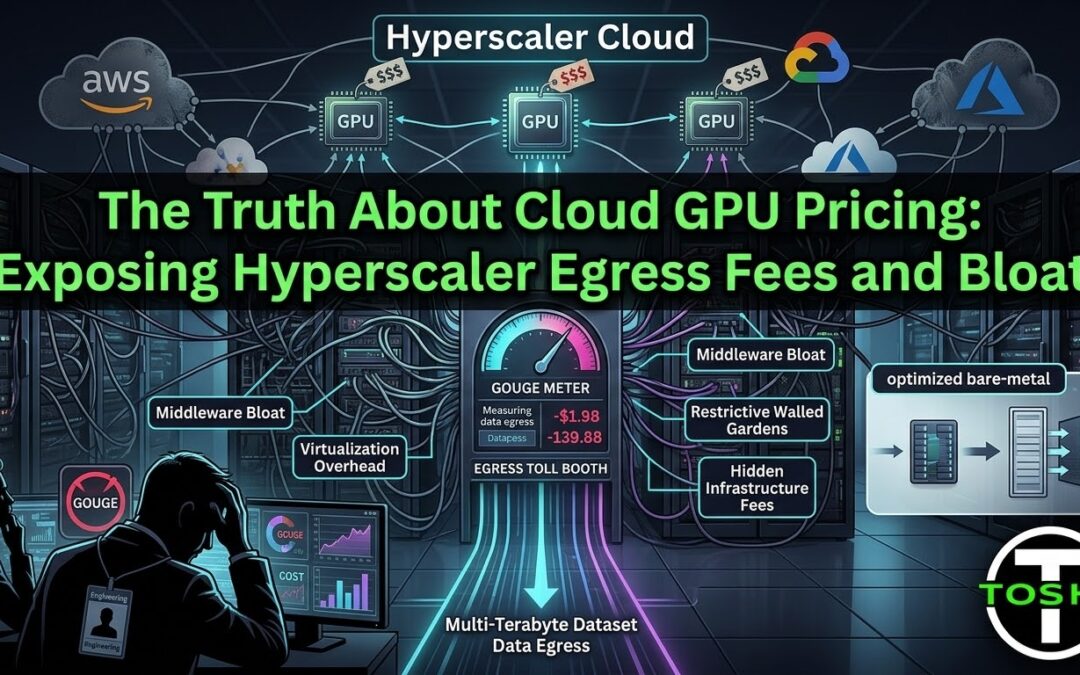

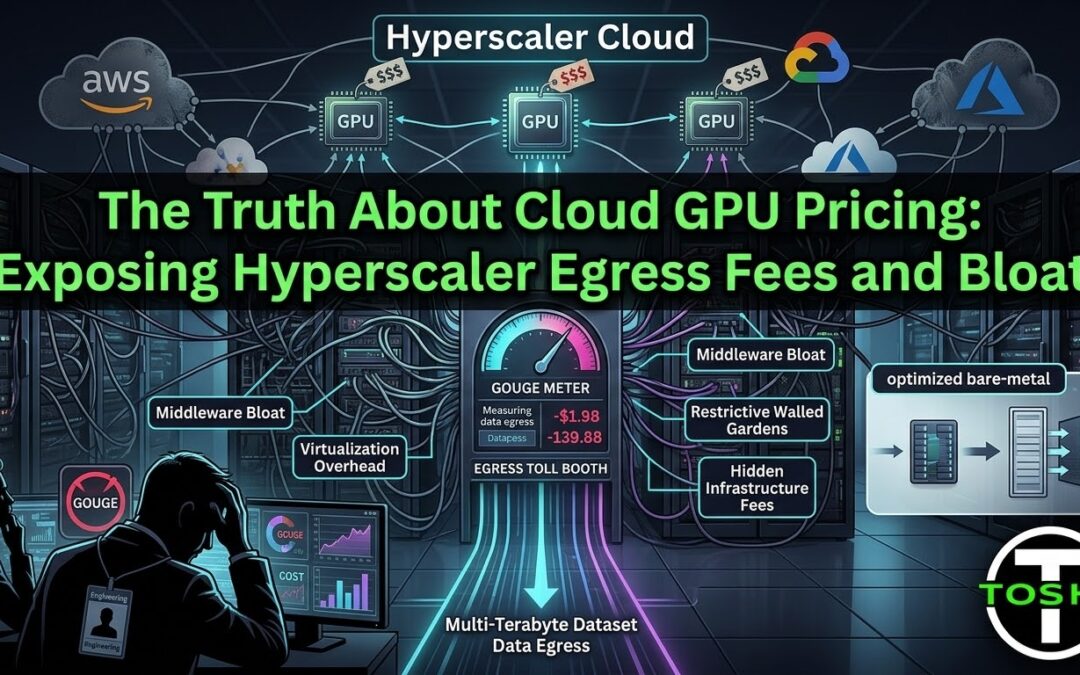

The Truth About Cloud GPU Pricing: Exposing Hyperscaler Egress Fees and Bloat

If you are a CTO overseeing massive machine learning pipelines, you already know that the sticker price of compute is a lie. When auditing cloud GPU pricing, the hourly rate is merely the...

by Chris Bernard | Mar 20, 2026 | Uncategorized

NVIDIA Blackwell B200 Availability: How to Skip the Hyperscaler Waitlist

In the AI arms race, compute is the primary bottleneck. For VPs of Engineering and Lead Researchers, there is nothing more frustrating than having the budget, the talent, and the data, but being...

by Chris Bernard | Mar 20, 2026 | Uncategorized

Migrating AI Workloads: Why Transitioning to ToshiHPC Won’t Stall Your Roadmap

When you have dozens of engineers building complex pipelines, the thought of migrating AI workloads to a new infrastructure provider feels like scheduling a root canal. CTOs...

by Chris Bernard | Mar 20, 2026 | Uncategorized

The CTO’s Guide to AI Model Data Security: Escaping the Hyperscaler Walled Garden

For modern CTOs and Lead AI Researchers, proprietary data and trained model weights are your ultimate competitive moat. When evaluating a migration away from AWS, GCP, or Azure,...